Liking AI was hard for me. This post is about how I finally learned to embrace it, and how you can too.

There's a lot in the AI-revolutionizes-work narrative that smells like bullshit. The promises of the brave new LLM-powered world feel wildly inflated. It kind of resembles sex in high school: everybody says they're doing it, but for many it's really more talk than action.

Still, the hype made me nervous. Unlike in past tech hype cycles (ahem, blockchain), this time people I respect are reporting serious productivity gains.

Meanwhile, my experience was mostly powerful autocompletion, information search, and error-prone, Tasmanian-devil agents. Helpful to an extent, but far from the 10x productivity claims from AI evangelists. It felt like I was on training wheels while the cool kids were doing no-hands wheelies.

It made me mad.

I felt inferior and superior at the same time: inferior because I wasn't getting the gains others were; superior because I was sure they were wrong and only fooling themselves into thinking they worked better now. Hell, there were even studies suggesting AI slows developers down.

But I couldn't help feeling I was missing out.

Luckily, my company is very pro-AI and has an active knowledge-sharing culture, so I decided to lean in.

Getting There

First I had to hype myself up. I found Nate B. Jones to be a resonant spokesman for why I should dive into this headfirst. Here's one of my favorites. It made me want to ship more and think more strategically.

Then I had to confront a simpler truth: I like coding.

I like the artisan aspect of it. I like solving problems and writing and polishing code. It's satisfying, like doing the NYT Games in the morning.

So whenever I prompt AI and it comes up with slop, it's frustrating. It feels like wasted time; I could've just done the work myself, the old-fashioned way.

Two observations helped me clarify things:

First, AI fatigue is real. AI makes it easy to lose flow. Instead of thinking deeply about the problem, it's easier to prompt the model and review its output. Your role shifts from IC toward something like a tech lead. Meanwhile, the tools seem to change every week. It's exhausting to keep up with all that.

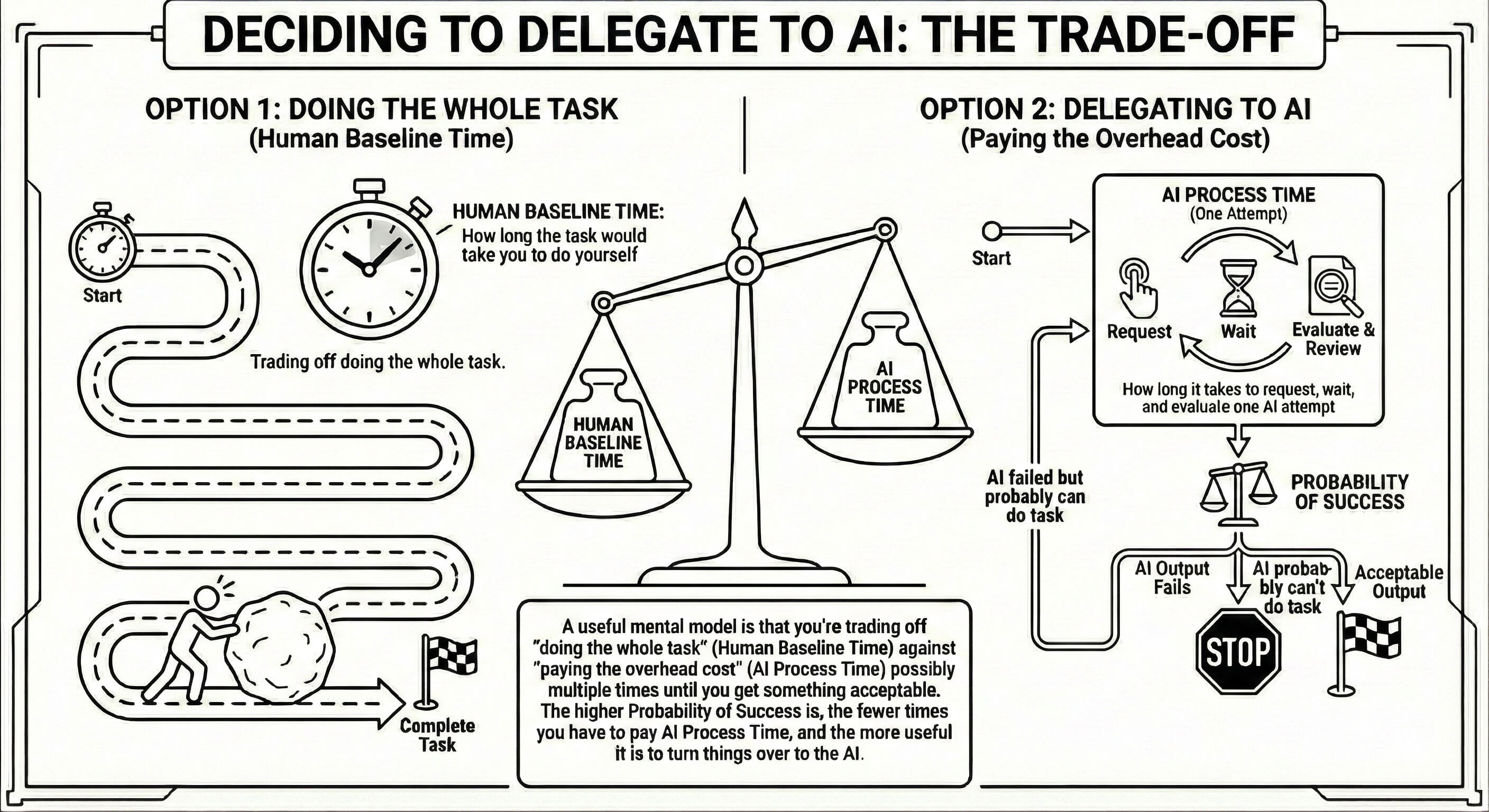

Second, working with AI boils down to simple math.

If a task takes N hours manually, and each AI attempt takes X hours and you need Y attempts, AI wins when:

X * Y < N

However, there's a catch: if the gains are tiny, it's really unsatisfying for the human operator (i.e., me). I lose the fun problem-solving and gain only a small productivity bump. That's not worth it; most of us probably wouldn't switch jobs for a small pay raise if the job itself got worse.
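To make the inequality and the catch concrete, here's a minimal Python sketch. The numbers are made up for illustration, and `min_saving` is just my shorthand for "the gain has to be big enough to be worth losing the fun"; it's an assumption, not something I've measured.

```python
def ai_total_hours(hours_per_attempt: float, attempts: float) -> float:
    """Total time spent going the AI route: X * Y."""
    return hours_per_attempt * attempts


def ai_wins(manual_hours: float, hours_per_attempt: float, attempts: float,
            min_saving: float = 0.0) -> bool:
    """Return True when the AI route beats doing it by hand.

    With min_saving=0 this is exactly X * Y < N.
    min_saving is a hypothetical knob: the fraction of N that must be
    saved before the switch feels worth it.
    """
    saved = manual_hours - ai_total_hours(hours_per_attempt, attempts)
    return saved > min_saving * manual_hours


# Hypothetical task: N = 4 hours by hand, X = 1 hour per AI attempt.
print(ai_wins(4.0, 1.0, 3))                  # True: 3 < 4, AI technically wins
print(ai_wins(4.0, 1.0, 3, min_saving=0.5))  # False: saving 1 hour isn't enough
print(ai_wins(4.0, 1.0, 1, min_saving=0.5))  # True: one attempt saves 3 hours
```

The last two lines are the whole point: with the same X, dropping Y from three attempts to one turns a marginal win into a clear one.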

So the real question becomes: can you reduce X or Y enough to make AI clearly better?

YMMV, but in my case, yes.

The Secret Sauce

I took a mini-sabbatical in the summer of 2025, just when Claude Code launched. Before that, I'd used Cursor for about a year and had moderate success with its integrated agents, but I wasn't confident enough to hand them anything complex.

When I returned to work in September, Claude Code had already taken off. I started using it, but was slightly disappointed. It wasn't the one-shot miracle people claimed it would be, although it definitely felt more solid than Cursor.

My assumption was that success required writing detailed CLAUDE.md files all over the codebase to give agents hints about how I wanted things done. The endless spec-writing and evaluation sounded both boring and short-lived, since those CLAUDE.md files might well be obsolete within a week.

It all changed when I discovered skills, and more specifically superpowers. Superpowers drastically reduces the number of attempts I need (Y in the inequality above).

My workflow now looks like this:

1. Brainstorm

I start with a rough outline of the project or task and refine it by running /superpowers:brainstorm. The agent scans the repo and asks clarifying questions.

2. Write the plan

Once satisfied, I generate a plan document by running /superpowers:write-plan. The agent proposes approaches and alternatives, and I decide the direction while suggesting my own ideas. The resulting plan document is then used by the agent when writing the code.

3. Execute the plan

Then I run /superpowers:execute-plan to write the code according to the plan. For now, I don't let it commit anything without my approval, so I can review everything it writes commit by commit.

4. Review

Finally, I open a draft PR and use Claude's /review and /code-review skills to review it. I resolve the feedback using superpowers' /receiving-code-review skill. Easy-peasy.

The output is now so good that I rarely need to do more than a few tweaks here and there.

I've finally seen the light: this is the future of coding.

When I meet LLM-skeptical colleagues now, I sympathize with them. I used to be one of them.

But I won't be rejoining their ranks. I probably can't convince them either; they'll have to experience it themselves.

All I can suggest is this: try Claude Code with superpowers.